Lecturer: Dr Maria Nikodemou

This course introduces parallel programming using distributed-memory message passing and the MPI (Message Passing Interface) standard. It covers the properties of the computing model and the basic facilities of the MPI standard. No prior knowledge of parallel programming is assumed. The goal is to teach the facilities used in most MPI codes developed in scientific and engineering research organisations, and to enable students to consult the MPI standard on specific points or when they need other facilities.

Students should leave the course knowing:

- The memory and process model for MPI programs.

- How to write, compile, and run a simple MPI program.

- How to send messages between pairs of processes.

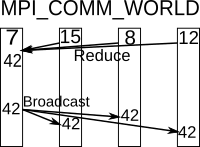

- How to communicate information between all processes simultaneously.

- The differences between buffered and unbuffered communication, and their implications for performance and correctness.

- Typical ways of decomposing data across multiple MPI processes.

- How to debug and tune MPI programs.

The topics are likely to include:

- Principles of message-passing

- Basics of the MPI interface

- Datatypes and collectives

- Point-to-point transfers

- Error handling

- Communicators and process groups

- Topologies

- Composite types and language standards

- Attributes and I/O

- Debugging, performance and tuning

- Problem decomposition

Prerequisites:

The ability to program in at least one of Fortran, C or C++, including familiarity with editing, compiling, debugging and running programs, preferably under a Unix-like system.

Literature:

Parallel Programming with MPI, P. Pacheco, Morgan Kaufmann, 1997.